Service Mesh: Architecture, Concepts, and Top 4 Frameworks

Learn about the pros and cons of the service mesh pattern, how a service mesh works, and discover the top 4 tools you can use to implement a service mesh with Kubernetes.

What Is a Service Mesh?

A service mesh provides features for making applications more secure, reliable and observable. These features are provided at the platform layer, not the application layer.

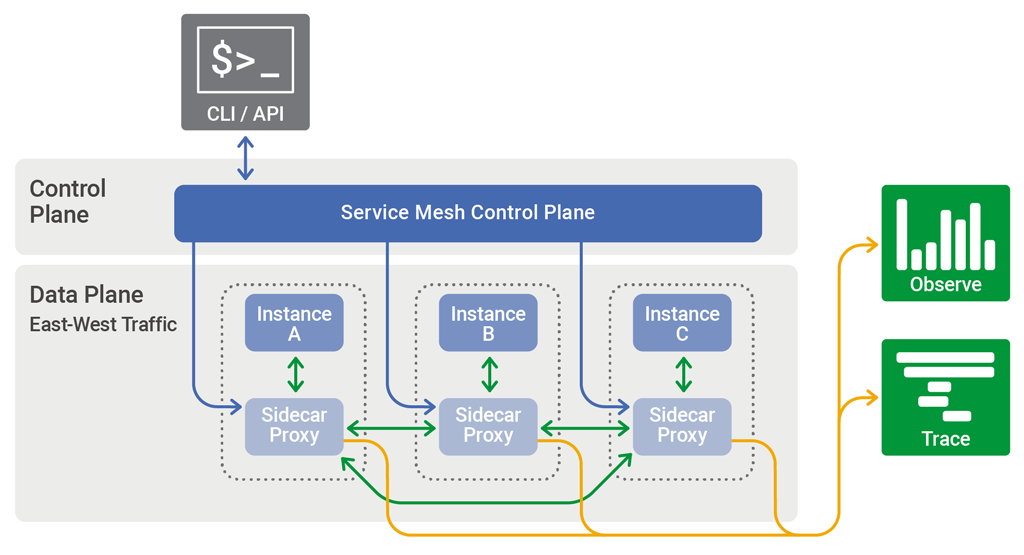

A service mesh is usually implemented as a set of network proxies deployed alongside application code in a sidecar pattern. The proxies manage communication between microservices and provide the entry point for service mesh features. Collectively, the proxies form the data plane of a service mesh, which is controlled as a scalable unit from the control plane.

Service meshes have been gaining popularity thanks to the widespread adoption of cloud-native applications. A cloud-native application can comprise hundreds of services and thousands of service instances. These instances are commonly managed by Kubernetes and scheduled dynamically on physical nodes, with their state constantly changing. This makes service-to-service communication extremely complex, and that communication directly affects the runtime behavior of an application.

Service mesh technology makes it possible to manage this complex inter-service communication, to ensure reliability, security, and end-to-end performance.

This is part of our series of articles about containerized architecture.


Service Mesh Benefits and Drawbacks

Service meshes can address some of the main challenges of managing communication between services, but they also have their drawbacks. Among the advantages of service meshes are:

- Service-to-service communication is simplified, both for containers and microservices.

- Communication errors are easier to diagnose, because they occur within their own infrastructure layer.

- You can develop, test and deploy applications faster, because developers don’t have to build communication logic into each service.

- Sidecar proxies placed alongside containers can effectively manage network services.

- Service meshes support various security features, including encryption, authentication and authorization.

Some of the drawbacks of service meshes are:

- A service mesh increases the number of runtime instances, because every service instance needs its own sidecar proxy.

- Communication involves an additional step—the service call first has to run through a sidecar proxy.

- Service meshes don’t support integration with other systems or services.

- They don’t address issues such as transformation mapping or routing type.

Service Mesh Architecture and Concepts

There is a unique terminology for the functions and components of service meshes:

- Container orchestration framework—a service mesh architecture typically operates alongside a container orchestrator like Kubernetes or Nomad.

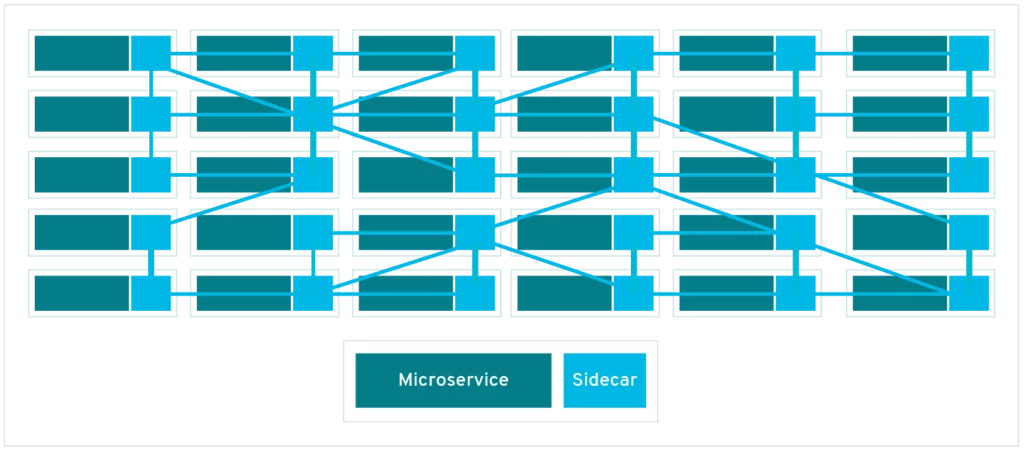

- Sidecar proxies—these run alongside each pod or instance and communicate with each other to route the traffic between the containers they run with. They are managed through the orchestration framework.

- Services and instances—instances (or pods) are running copies of microservices. Clients usually access instances through services, which are scalable, fault-tolerant replicas of the instances.

- Service discovery—instances need to discover available instances of the services they need to interact with. This usually involves a DNS lookup.

- Load balancing—orchestration frameworks usually provide load balancing for the transport layer (Layer 4), but service meshes can also provide load balancing for the application layer (Layer 7).

- Encryption—service meshes can encrypt requests and responses, and then decrypt them. They prioritize the reuse of established connections, which minimizes the need to create new ones and improves performance. Typically, traffic is encrypted using mutual TLS, with the public key infrastructure generating keys and certificates and keys used by sidecar proxies.

- Authentication and authorization. The service mesh can authorize and authenticate requests made from both outside and within the app, sending only validated requests to instances.

- Circuit-breaker pattern—this involves isolating unhealthy instances and bringing them back gradually if required.
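The circuit breaker pattern can be sketched in a few lines. The Python below is a minimal, hypothetical illustration (the `CircuitBreaker` class, its thresholds, and the injectable clock are assumptions, not the API of any mesh): after a set number of consecutive failures the breaker opens and rejects traffic to the instance, and once a timeout passes it lets requests through again on a trial basis. Real meshes typically configure this declaratively in the sidecar proxy and reintroduce traffic more gradually than this simplified version.

```python
import time


class CircuitBreaker:
    """Hypothetical per-instance circuit breaker (simplified sketch).

    closed    -> requests flow; consecutive failures are counted
    open      -> requests are rejected until `reset_timeout` seconds pass
    half-open -> requests are let through again on trial; a success closes
                 the breaker, a failure re-opens it
    """

    def __init__(self, failure_threshold=3, reset_timeout=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self.clock = clock          # injectable so examples and tests run instantly
        self.failures = 0
        self.opened_at = None       # None means the breaker is closed

    @property
    def state(self):
        if self.opened_at is None:
            return "closed"
        if self.clock() - self.opened_at >= self.reset_timeout:
            return "half-open"
        return "open"

    def allow_request(self):
        # Reject traffic only while the instance is isolated.
        return self.state != "open"

    def record_success(self):
        # Any success, including a half-open trial, closes the breaker.
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        if self.state == "half-open":
            # The trial failed: isolate the instance again.
            self.opened_at = self.clock()
            return
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()


# Usage with a fake clock.
now = [0.0]
breaker = CircuitBreaker(failure_threshold=2, reset_timeout=10.0, clock=lambda: now[0])
breaker.record_failure()
breaker.record_failure()
print(breaker.state, breaker.allow_request())  # open False
now[0] = 10.0
print(breaker.state, breaker.allow_request())  # half-open True
breaker.record_success()
print(breaker.state)                           # closed
```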

The data plane is the part of a service mesh that manages network traffic between instances. The control plane generates and deploys the configurations that control the data plane. It usually includes (or can connect to) a command-line interface, an API and a graphical user interface.
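To make the split between the two planes concrete, here is a minimal, hypothetical sketch in Python. The `ControlPlane`, `SidecarProxy`, and `RouteConfig` names and the IP addresses are illustrative assumptions, not any mesh’s real API. The control plane holds the desired endpoints and pushes versioned snapshots to every registered proxy, while each proxy routes requests using only its locally cached copy, round-robining across instances.

```python
from dataclasses import dataclass


@dataclass
class RouteConfig:
    version: int
    endpoints: dict  # service name -> list of instance addresses


class ControlPlane:
    """Hypothetical control plane: stores desired state and pushes it to proxies."""

    def __init__(self):
        self.version = 0
        self.endpoints = {}
        self.proxies = []

    def snapshot(self):
        # Hand out copies so proxies cannot mutate control-plane state.
        return RouteConfig(self.version, {s: list(a) for s, a in self.endpoints.items()})

    def register(self, proxy):
        self.proxies.append(proxy)
        proxy.apply(self.snapshot())

    def set_endpoints(self, service, addresses):
        self.endpoints[service] = list(addresses)
        self.version += 1
        for proxy in self.proxies:
            proxy.apply(self.snapshot())


class SidecarProxy:
    """Hypothetical data-plane proxy: routes using only its cached config."""

    def __init__(self, name):
        self.name = name
        self.config = RouteConfig(0, {})
        self._next = {}  # per-service round-robin position

    def apply(self, config):
        # Keep the newest config; ignore stale or out-of-order pushes.
        if config.version >= self.config.version:
            self.config = config

    def route(self, service):
        # No call to the control plane here: the lookup is purely local.
        addresses = self.config.endpoints.get(service)
        if not addresses:
            raise LookupError(f"no known endpoints for {service!r}")
        i = self._next.get(service, 0)
        self._next[service] = i + 1
        return addresses[i % len(addresses)]


control_plane = ControlPlane()
frontend_proxy = SidecarProxy("frontend")
control_plane.register(frontend_proxy)
control_plane.set_endpoints("payments", ["10.0.0.5:8080", "10.0.0.6:8080"])
print(frontend_proxy.route("payments"))  # 10.0.0.5:8080
print(frontend_proxy.route("payments"))  # 10.0.0.6:8080
```

Because routing reads only the cached snapshot, a proxy in this sketch keeps serving traffic if the control plane becomes unavailable; it simply stops receiving updates until the control plane returns.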

How a Service Mesh Works

Service meshes don’t introduce new functionality to the runtime environment of an application. With any architecture, applications require rules to define how requests are communicated. What makes service meshes different is that they move the logic that governs service-to-service communication out of individual services and into an infrastructure layer.

This is achieved by building a service mesh into an application as a set of network proxies.

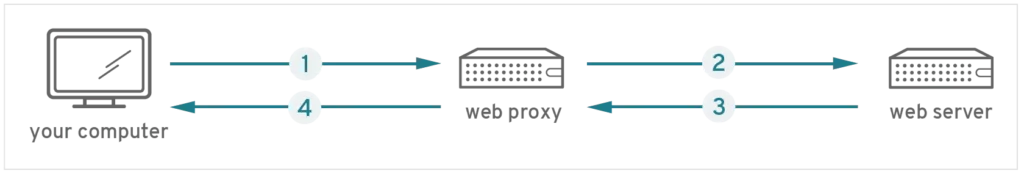

Proxies are common for accessing websites from enterprise devices. Typically, requests for a web page are first received by a company’s web proxy, which checks them for security issues—only then are the requests sent to the host server. Responses from the page are also sent to the proxy for security checks, before being returned to the user.

The proxies in a service mesh are also known as sidecars, because they run alongside microservices, not within them. They route requests between microservices through their own infrastructure layer. Collectively, the proxies form a network known as a mesh.

If you don’t have a service mesh, you need to code each microservice with logic that governs communication between services. Not only is this an inefficient use of developer time, it also makes communication failures harder to diagnose, because the logic governing service-to-service communication is hidden inside each service.
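As a hypothetical illustration of moving that logic out of the service, the sketch below wraps an upstream call in a sidecar-style object that adds retries with exponential backoff. `RetryingSidecar` and `flaky_inventory` are assumptions invented for the example; in a real mesh this behavior lives in a separate proxy process and is configured through the control plane, not imported into the service as a library. The point is that the calling code only issues a request, and the retry policy sits in one place.

```python
import time


class RetryingSidecar:
    """Hypothetical sidecar-style wrapper: retries failed upstream calls with
    exponential backoff so the calling service needs no retry code of its own."""

    def __init__(self, upstream, max_attempts=3, backoff_seconds=0.1, sleep=time.sleep):
        self.upstream = upstream          # the real call to the destination service
        self.max_attempts = max_attempts
        self.backoff_seconds = backoff_seconds
        self.sleep = sleep                # injectable so tests need not wait
        self.attempts = []                # attempts used per call, a stand-in for telemetry

    def call(self, request):
        for attempt in range(1, self.max_attempts + 1):
            try:
                response = self.upstream(request)
            except ConnectionError:
                if attempt == self.max_attempts:
                    self.attempts.append(attempt)
                    raise  # out of retries: surface the last error to the caller
                # Back off 0.1s, 0.2s, 0.4s, ... before the next attempt.
                self.sleep(self.backoff_seconds * 2 ** (attempt - 1))
            else:
                self.attempts.append(attempt)
                return response


# Hypothetical upstream that drops the first two connections.
calls = {"count": 0}


def flaky_inventory(request):
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("connection reset by upstream")
    return {"sku": request["sku"], "in_stock": True}


sidecar = RetryingSidecar(flaky_inventory, sleep=lambda seconds: None)
print(sidecar.call({"sku": "A-100"}))  # {'sku': 'A-100', 'in_stock': True}
print(sidecar.attempts)                # [3]
```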

Top 4 Service Mesh Options for Kubernetes

Here are some of the top service mesh tools you can use to manage communication between microservices in a Kubernetes environment.

Istio

Istio was originally developed by Google, IBM and Lyft, and uses Envoy, the proxy Lyft created, as its sidecar. It was one of the first Kubernetes-native service meshes to offer extra features such as deep-dive analytics, and it is backed by many tech companies, including Google, Microsoft and IBM.

Istio’s sidecar proxies cache the configuration they receive from the control plane, so they don’t have to contact the control plane for each call. This keeps the data plane separate from the control plane. The control plane itself runs as pods in the Kubernetes cluster, which provides higher resilience in the event that a pod anywhere in the service mesh fails.


Linkerd

Linkerd is probably the next most popular Kubernetes service mesh and is similar to Istio in terms of architecture (since v2). Linkerd emphasizes simplicity over flexibility and is exclusive to Kubernetes, so there are fewer moving pieces, making it less complex overall. Linkerd v1.x, which supports other container platforms as well, continues to be supported, but does not benefit from new features which have primarily been intended for v2.

Linkerd is hosted by an independent foundation, the Cloud Native Computing Foundation (CNCF), which is also responsible for Kubernetes, and in 2021 it became the first service mesh to reach CNCF graduated status.

AWS App Mesh

AWS App Mesh manages and monitors microservices through a dedicated infrastructure layer that handles interservice communication. App Mesh standardizes how services communicate, provides end-to-end visibility into your applications, and helps maintain high availability, with network traffic controls for each service in your application.

Consul Connect

Consul is a service management framework from HashiCorp. It was originally built for service discovery and configuration, works closely with HashiCorp’s Nomad scheduler, and now supports several container management platforms, Kubernetes included.

With the addition of Connect (in v1.2), Consul adds secure service-to-service communication, with mTLS encryption and identity-based authorization, on top of its service discovery, allowing it to serve as a complete service mesh. On Kubernetes, Connect uses Consul agents installed on each node as a DaemonSet, which communicate with the Envoy sidecar proxies that route and forward traffic.

Zero-Trust Security and Service Mesh

Security is a critical concern for cloud native applications. Many organizations are adopting a zero trust approach, which is especially suited to a cloud native environment. The zero trust security model assumes that there is no network perimeter. It does not trust any entity, even if it is “inside” the network, and requires ongoing authentication and verification of any communication between application components, as well as external communication.

Here are a few ways a service mesh can help implement zero trust security:

- Enforcing Mutual Transport Layer Security (mTLS) for all microservices communication

- Managing identity and authorization for microservices

- Enabling central management of access control and enforcing least privilege for each microservice and role (in Kubernetes, this can be done with RBAC)

- Facilitating monitoring and observability for microservices, to ensure that suspicious access attempts or traffic can be identified and blocked

- Adding controls over ingress and egress, with a micro-perimeter around each microservice
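Least privilege for microservices can be sketched as a default-deny allow list keyed on workload identity. In this hypothetical Python example, identities are SPIFFE-style URIs of the form `spiffe://<trust-domain>/ns/<namespace>/sa/<service-account>`, similar to the identities Istio derives from Kubernetes service accounts and places in workload mTLS certificates. The `Rule` and `AuthorizationPolicy` classes and the `shop` namespace are illustrative assumptions, not a real mesh API. Denied attempts are recorded so that suspicious traffic can feed monitoring.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Rule:
    source: str         # caller identity, e.g. read from its mTLS certificate
    destination: str    # callee service name
    methods: frozenset  # HTTP methods the caller may use


@dataclass
class AuthorizationPolicy:
    """Hypothetical default-deny policy: a call is allowed only if some rule matches."""
    rules: list
    denied: list = field(default_factory=list)  # audit trail for monitoring

    def check(self, source, destination, method):
        allowed = any(
            r.source == source and r.destination == destination and method in r.methods
            for r in self.rules
        )
        if not allowed:
            self.denied.append((source, destination, method))
        return allowed


FRONTEND = "spiffe://cluster.local/ns/shop/sa/frontend"
CHECKOUT = "spiffe://cluster.local/ns/shop/sa/checkout"

policy = AuthorizationPolicy(rules=[
    Rule(FRONTEND, "checkout", frozenset({"GET", "POST"})),
    Rule(CHECKOUT, "payments", frozenset({"POST"})),
])

print(policy.check(FRONTEND, "checkout", "POST"))  # True: explicitly allowed
print(policy.check(FRONTEND, "payments", "POST"))  # False: no rule, so denied by default
```

Starting from deny-all and adding one narrow rule per caller, destination and method is what keeps each service’s privileges minimal; an impersonating workload without the right identity matches no rule.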

A service mesh can help mitigate the following types of attacks:

- Service impersonation – an attacker pretends to be an authorized service in a private network, and attempts to access other services or gain access to sensitive data.

- Packet sniffing – an attacker infiltrates the private network and intercepts communication between microservices.

- Unauthorized access – careless or malicious insiders attempt an unauthorized request from a legitimate service to another service.

- Data exfiltration – an attacker inside a protected network transfers data from a protected resource to a location outside the network.

Service Mesh Security with Aqua Security

Aqua security solutions can be deployed in a service mesh environment, whether it’s based on Istio and Envoy proxies or on Linkerd proxies (including Conduit, which was merged into Linkerd 2). The security solutions are transparent to the service mesh environment, and container firewall rules can be used to enforce network security rules in parallel with Envoy or Linkerd policies.