Istio, Google’s open source project for large-scale containerized application management, was released in May 2017 and has undergone rapid development since then, culminating in the landmark 1.0 release in July 2018. In this blog post we will explore what Istio is, how it works and how to adopt it. Subsequent articles in the series will cover Istio’s security and traffic control features.

What is Istio?

Over the past few years, a number of open-source projects have been released that reflect how Google has internally built, deployed and managed large-scale containerized distributed applications. Istio represents the last portion of that stack – the application. Istio’s Google origins help explain some of its design decisions and its historical context.

Netflix have spoken at length about their chaos engineering practices, as well as concepts such as fault injection, circuit-breaking, throttling and tracing. In order to have these features without requiring each application developer to re-implement them for each new project, they need to be made available in the underlying network. There are two ways of achieving this:

1. Build these features into networking libraries (as Netflix did with Hystrix) and use them across your organization whenever network requests are made. You would have to do this in every language your company uses, and maintain the libraries for all your services and teams.

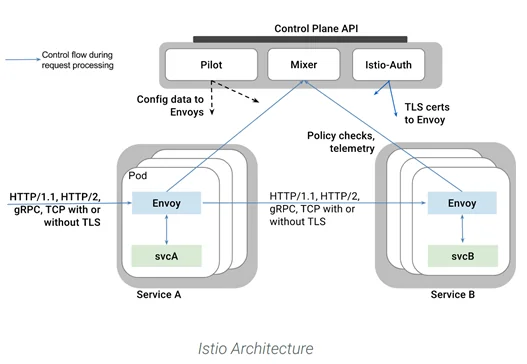

2. Have the network provide those features transparently, by using a service mesh. This is the Istio approach. Istio achieves this by leveraging the Envoy proxy, which runs as a sidecar within each pod and is dynamically reconfigured by the Istio control plane, as can be seen in the diagram below:

It is this Envoy sidecar pattern that allows Istio to be a drop-in solution that doesn’t require modifications to the application. Containers are configured to send all their networking through the Envoy proxy which is dynamically reconfigured with policy from the Istio control plane. This allows Istio to provide features like mutual TLS, throttling and circuit breaking transparently.
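To make concrete the kind of logic that either every networking library or the mesh has to provide, here is a minimal sketch of the classic library-style circuit breaker. It is an illustration only: the class and parameter names are hypothetical, and it is not how Envoy implements circuit breaking (Envoy works from configured connection and request limits rather than a wrapper around each call).

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    """Fail fast after repeated failures, then retry after a cool-down."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # cool-down in seconds
        self.clock = clock                # injectable so it can be tested
        self.failures = 0
        self.opened_at = None             # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open, failing fast")
            # Cool-down elapsed: let one trial call through (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        # Any success closes the circuit and resets the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

With the library approach, something like this has to be written, tested and kept consistent in every language your teams use. With a sidecar, the application simply makes its request and the proxy applies the policy.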

Istio is more than just a service mesh: it is built upon one other key concept, service identity. Just as users are authenticated in a system to ensure they are who they claim to be, so too can services have identity and likewise be authenticated. This allows us to enforce role-based access control (RBAC) between services and generally have more control over what services can do in our network.
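As a rough illustration of what access control keyed on service identity means, the sketch below checks a request against a small allow-list. The service names, the `Rule` type and the `is_allowed` function are all hypothetical and are not Istio’s API; the sketch also assumes the caller’s identity has already been authenticated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    source: str         # identity of the calling service
    methods: frozenset  # HTTP methods the caller may use
    path_prefix: str    # paths the caller may reach


def is_allowed(rules, source, method, path):
    """Deny by default; allow only when some rule matches the request."""
    return any(
        rule.source == source
        and method in rule.methods
        and path.startswith(rule.path_prefix)
        for rule in rules
    )


# Hypothetical policy: the frontend may read products, and the orders
# service may read and create payments. Everything else is denied.
RULES = [
    Rule("frontend", frozenset({"GET"}), "/products"),
    Rule("orders", frozenset({"GET", "POST"}), "/payments"),
]
```

For example, `is_allowed(RULES, "frontend", "GET", "/products/42")` is permitted, whereas the same service attempting a `POST` to that path is not. The point is that the decision is made on who the caller is and what it is asking for, not on its IP address.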

We’ll be discussing Istio largely in the context of Kubernetes, although it is also possible to run Istio on VMs, and even to extend the Istio service mesh across Kubernetes clusters and VMs.

What are the benefits of adopting Istio?

- Out-of-the-box microservices telemetry – your microservices will automatically have telemetry surfaced through Istio, giving you a unified view of application metrics and traces without having to do any instrumentation.

- Mutual TLS – Istio can be configured to apply mutual TLS (mTLS) to all inter-service communications without having to change the application. An in-cluster CA will provide each Envoy proxy with the requisite certificates to secure inter-service traffic.

- Red/black deployments – by dynamically reconfiguring the traffic split between “old” and “new” versions of an application during a deployment, you can safely and gradually roll out a release to production, observing the cluster for errors throughout.

- Expressive network policy – whilst Kubernetes provides RBAC for access to its API and network policy for inter-service traffic policy, with Istio we have RBAC for services and a richer language for expressing network policy that allows restriction of HTTP verbs and paths instead of just IPs.
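The traffic split behind a gradual rollout can be pictured as a weighted choice made for each request. The sketch below is a simplified model of that idea, not Istio’s implementation; the function name and the version labels are assumptions made for illustration.

```python
import random


def pick_version(weights, rng=random):
    """Choose a version for one request in proportion to its weight.

    `weights` maps a version label to a non-negative integer weight,
    for example {"old": 90, "new": 10}.
    """
    versions = list(weights)
    return rng.choices(versions, weights=[weights[v] for v in versions], k=1)[0]


if __name__ == "__main__":
    # Send roughly 10% of 10,000 simulated requests to the new version.
    rng = random.Random(0)
    picks = [pick_version({"old": 90, "new": 10}, rng) for _ in range(10_000)]
    print("share sent to new:", picks.count("new") / len(picks))
```

During a rollout you would move the weights in steps, say from 90/10 towards 0/100, watching your error rates at each step and reverting to 100/0 if something looks wrong.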

Application developers can focus on delivering business value at Layer 7 rather than spending time writing overlapping solutions to infrastructure problems.

Istio architecture

Istio consists of a control plane of several management components, and a collection of services running with an Envoy sidecar proxy that is reconfigured by the control plane. The control plane is made up of the following components:

- Pilot: Manages and configures all the Envoy proxy instances, including applying traffic routing policies and RBAC configuration

- Mixer: Telemetry collection and some additional real-time authorization checks not handled by Envoy

- Citadel: The Certificate Authority that issues and rotates TLS certificates

- Galley: A behind-the-scenes component of little relevance to us here. It gathers and validates user configuration on behalf of the other components of the system

- Proxy: Envoy proxy runs as a sidecar to every Kubernetes pod, providing dynamic service discovery, load balancing, TLS termination, RBAC, HTTP and gRPC proxying, circuit breaking, health checks, dynamic rollouts, fault injection and rich metrics

- Gateway: Describes an edge load balancer that allows ingress to or egress from the cluster. Ingress traffic is configured using route rules, in the same way as for any other Istio component

Adopting Istio: lessons learned from the trenches

Whilst adopting Istio will give you immediate benefits, unlocking its full promise depends on having a well-designed microservice architecture. Building a successful microservices system – with many small services built by multiple teams – requires a level of organizational and operational transformation that is too often left out of the discussion.

Having said that, Istio can still be of benefit regardless of your application’s design, or its maturity. Increased observability can help to untangle a problematic microservices design without having to change application code to understand the problems. Adopting Istio is therefore beneficial as part of a migration, re-architecting or consolidation project, but really shines when adopted in the context of a well-designed microservice project. Bear in mind, however, that adding any new component, including Istio, into a system will increase operational complexity.

Installing Istio involves installing the control plane components, as well as configuring your Kubernetes pods to include Envoy and direct all traffic through it. The Istio CLI tool `istioctl` has a command, `kube-inject`, that will modify your YAML files to include Envoy in your pods. Another way is to use the Istio mutating webhook admission controller to automatically add Envoy to pods at deployment time. Either way, once Istio is installed and Envoy sidecars are running, you have all the benefits of Istio without the application knowing anything about it.
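Conceptually, both injection routes rewrite the pod definition so that a proxy container sits alongside your application container. The sketch below models only that one step on a plain dictionary. The `inject_sidecar` function and the image name are placeholders invented for illustration, and real injection does considerably more, including setting up the redirection of the pod’s traffic through the proxy.

```python
import copy


def inject_sidecar(pod_spec, proxy_image="example/envoy-proxy:placeholder"):
    """Return a copy of pod_spec with a proxy container added.

    The input is left untouched, and a spec that already has the
    sidecar is returned unchanged, so injecting twice is harmless.
    """
    patched = copy.deepcopy(pod_spec)
    containers = patched.setdefault("containers", [])
    if any(c.get("name") == "istio-proxy" for c in containers):
        return patched
    containers.append({"name": "istio-proxy", "image": proxy_image})
    return patched


if __name__ == "__main__":
    pod = {"containers": [{"name": "app", "image": "example/app:1.0"}]}
    for container in inject_sidecar(pod)["containers"]:
        print(container["name"], container["image"])
```

The application container’s definition is not modified at all, which is why the application does not need to know that the proxy is there.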

It is recommended to start with a “silent” Istio installation and adopt the various features piecemeal, lest you introduce too many changes at once and find debugging them difficult. The Istio team actually promote what they call “Istio a la carte”, by which they mean that you needn’t use all of Istio at once. Many people find the default telemetry alone to be hugely beneficial as a starting point for adopting Istio.

Istio and Security

Where Istio really shines is service identity, RBAC and end-to-end mutual TLS. Future posts in this series will explore these aspects in more detail.

Conclusion

Istio’s choice of a service mesh design, rather than the library approach of Hystrix, makes adoption and maintenance easier. Istio has the potential to transform operations and security for your microservices deployment, but in order to get the most from it you require a well-designed microservices architecture. Even the most legacy of systems will benefit from the increased security and telemetry provided by Istio out of the box.

Note: This is a guest blog post by Luke Bond, previously with Control Plane.