Code to Cloud to Prompt: Protecting Private AI Deployments

AI workloads are showing up in CI/CD pipelines, Kubernetes clusters, and air-gapped environments, but most teams don’t realize how exposed they are. Models, agents, and RAG components introduce new attack paths that slip past standard scanners and runtime tools.

In this session, Aqua Security Field CTO Tsvi Korren explains where those risks exist inside containerized AI stacks and what practitioners can do about them right now. You’ll learn how to trace AI components from code to container, detect prompt and model abuse at runtime, and apply proven container security techniques to protect local AI deployments.

What you’ll learn:

• How AI code, models, and tools expand your container attack surface

• Practical steps to secure AI pipelines without slowing delivery

• How to use runtime visibility to detect real-world AI threats in production

• Ways to extend your existing controls to cover on-prem and edge AI workloads

In this session, Aqua Security Field CTO Tsvi Korren explains where those risks exist inside containerized AI stacks and what practitioners can do about them right now. You’ll learn how to trace AI components from code to container, detect prompt and model abuse at runtime, and apply proven container security techniques to protect local AI deployments.

What you’ll learn:

• How AI code, models, and tools expand your container attack surface

• Practical steps to secure AI pipelines without slowing delivery

• How to use runtime visibility to detect real-world AI threats in production

• Ways to extend your existing controls to cover on-prem and edge AI workloads

Transcript

Hi, everybody. Welcome to this session.

We are going to talk about AI and how we can protect local AI deployments.

As you're probably aware, AI is the hottest trend in the market nowadays, and it doesn't just divide, right? We know what the numbers are. The numbers are that there is a lot of push towards AI in organizations. We see upwards of ninety percent of organizations that are actually able to think about what their AI strategy is. Not that they have a lot of advancement there, right? Only five percent of organizations actually do have a clear plan, but everybody thinks that they need to do something with AI.

And what we're seeing though is that security and specifically the governance around AI is lagging, right? We have statistics that tell us that many organizations still don't have a plan on how to tackle AI deployments. If they're done locally, we still have very little runtime controls. We have very little governance even though the regulation is starting to creep up and we have now some frameworks that we can talk about with regards to how we wanna posture our AI, but the end result is that AI is here. AI deployments are here. There are a lot of use cases for them. And in the next thirty minutes or so, we're gonna break down what are the problems that we wanna solve and also what are the solutions or how the solution should look like if we're going to have a good secure AI deployment.

But before we get into that, why do we even wanna have AI in an organization? What's the benefit? Everybody tells you that we need to support it, but what are really the use cases?

So there are actually three kind of main broad based use cases around AI. One is the one I think that we're most familiar with, which is the ability to do some chat with an AI chatbot. So that would be your ChatGPT, your Grok, your Gemini, your Copilot, and so on. And this is between the user and the AI engine. It's a very valid use case. There's a lot to say about that, but the purpose of this presentation is actually the other two use cases, is what happens when we start to develop applications that start to make use of AI components. And it could be that the application itself is using AI to do some backend processing, or it could be that the application is actually providing a natural language interface and then users are able to interact with the software instead of clicking checkboxes and prompts and wizards. We are actually talking to an application like we would to a human that might need to give us that service.

But when we do that, we got to remember that the basic flow of AI, the way that we see it from our lived experience, talking to a chatbot, is actually a little bit more complex. If we think that we're just asking AI a question, so we give it a prompt, the prompt has a inference server that the information is being given to, and then the AI model is the one that's generating the response, this three step process is actually very, very rudimentary, right? Our lived experience is not indicating everything that actually goes on behind the scenes.

The reason is that the model itself, if we talk about what is an AI model, it's really like a brain in a box, right? It knows what it knows, it knows what it was trained on, but it really doesn't know the specifics of a particular organization or a particular company unless you train it specific for that company. So if you're gonna use an AI infrastructure, one of the things that you may have to ask yourself is, am I gonna use a generic model and then try to augment the input, or am I gonna train the model just for me?

And this is really, really important because if you don't train the model and you provide an application, let's say it's a banking application, and you're trying to make the user do something like transfer money from checking to savings, if you just give this prompt to a generic AI that hasn't been trained or hasn't been augmented with the specifics of the organization that you're talking to, if you're like, let's say the bank that wanna provide a service, you're gonna get a very generic response. And if you experience this as a user, right, in your banking application, if I went to my banking application and submitted that prompt and got that response, my experience is not going to be very good. Because I didn't ask how generically to do it, I just wanted the system to do what I asked it to do.

And this is where we got to understand that the prompt that we're sending an AI application, even the simple chatbot, even just a generic ChatGPT in a private window, is still going to have a lot of decoration and information around it. Because the prompt that you're going to send in our fictional application will eventually at the bottom have what the user requested, but at the top, there is a lot of information that is provided to the model around what the user is actually trying to do. It first of all defines the the role of the AI system itself, like your banking application. It defines the role of the user. What is the user allowed or not allowed to do? It defines what tools and agents the AI application is able to use.

When we talk to, if you hear the term agentic AI, that is really just AI taking action as if it was a user and not just providing answers. And if you want the AI to take action, we got to be very, very specific in what action we want the AI to take. So our model is actually getting a lot of information that is not specifically referred to in the prompt, but is inferred by the fact that we have our application is the one that decraps the prompt. So all the things that the model does need, user data, like what is the permitted actions, what is the mood of the user, what is the intent of the user, what tools it can use, what tools it can't use. All of these have to be defined in advance in the context of our application.

So when we're building an AI infrastructure, we are really building not just a prompt to a model, we have a lot of other tools around it that helps us build that model to the extent that it can be used by the application. So that would mean that our prompt might go through a gateway to validate the identity of the user and tell us what it can do and cannot do. There is a lot of context. So if you hear the term MCP, the context protocol for models, that is how we augment the prompt. That is how we provide the prompt with ancillary information that might have changed since it was trained. You might hear the word rag. So that is a way to provide documents and extra stuff that the model can draw on in order to provide its answer. There are tools that can provide us with the ability of the the AI engine to do something in in the real world. And then we have the model itself, and that model, if you're doing it internally and you don't have to host the model internally, but if you even host the model internally, you also have the hardware requirements of the GPU. So it's a little bit more complex than three step process that we saw earlier.

But if you wanna deploy all of these components in house, those are actually gonna translate into quite a lot of, let's face it, Kubernetes deployments. At the end of the day, we're really talking about Kubernetes and we're really talking about how we can protect the infrastructure that you're going to run-in house. So if we're going to translate all of these to a set of Kubernetes deployment, it might look something like this. It might look like a set of deployments that are in Kubernetes. Some of them are going to deal with the application. So the top line is probably something that is more familiar to you as people who write or maybe manage applications in organizations. And then the rest of it is driving the AI process, Documenting the data, making sure that we have all the information we need in order to successfully execute on that transaction.

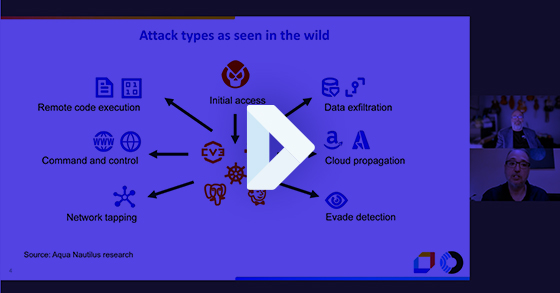

Now there's a lot of infrastructure, right? There's a lot of infrastructure that lives in containers. We know that infrastructure is containers is a target for bad actors that might want to do something. But when we are dealing with AI that is wrapped in containers, there is a way that attackers might be able to go into this environment that is outside the normal ways that we know and probably can deal with when we're dealing with kind of classic security around intrusion detection and just protecting the the infrastructure itself. And and the fact is that that that there are pretty ingenious ways in which ban actors are starting to exploit the way that AI systems are built in organizations.

It means that there could be prompt injections that make use of tools. It could be leaks of data by the model, right? The model, for instance, in the example that we talked about, the bank account might return a reply, Yes, I've successfully transferred money from checking account number this and that from the checking to the savings and reveal the account numbers. Now this is an unintended consequence, but we gotta make sure that that doesn't happen. Right? So so how can you make sure that that your model is not divulging information as as it's doing its its transactions?

And then there there are all these abilities that that that we need to have in order to to safeguard our our AI infrastructure from from attacks. So what are we missing here? Right? There there's a big infrastructure that's that's being put in place both internal and external to the organization. Information is gonna flow between different components of that that that that infrastructure, and it leaves a pretty big gap for security org organizations to to fill inside companies. Because currently there's really very little visibility as to what AI actually does, where does it look, what is it doing in environment. There's really no way to govern AI in its current state, that it follows any kind of policy. Right? We don't have still the ability to impact like system prompts based on organizational policy. There is no real protection against prompt attacks that might be enticing the AI system to do something that it shouldn't, especially if we're using tools. It's very easy to guilt and have emotional manipulation of AI systems to make them do something that they don't want to do.

And if we're going to put security in place because we're running fast, because we have a lot of very cutting edge technology development taken on in many places at once, It's it's really hard to tell developers and the people that that run the AI infrastructures and and the businesses that push for the adoption of these AI infrastructures that they need to stop and and let's just put a lot of security into this. Right? There's a lot of resistance from the business, from the applications team, from the development teams to slow them down and introduce more things.

So there are challenges. There are challenges in the fact that we're not sure what to do as security professionals. This is very new. We might not have the tools in place and we might not even have the political will of an organization to take the, in my opinion, very necessary pause to see how we're actually going to absorb this thing.

Now, it's not all bad news, right? There are very concrete things that security professionals and cloud native professionals can do in order to stabilize and start to govern those AI deployments, but it requires some actions and requires some idea about what we need to do.

So if we're going go over the kind of required capabilities of what AI security might look like, there are some questions that they need to answer. They need to answer, first of all, where is AI being used? Is it used by users? Is it used by applications? Where is it deployed? Is it deployed where we want it to be deployed? Are the right models in place? There's a lot of just needs to understand what is actually in there in the infrastructure. Because most of that infrastructure is going to run-in containerized applications, our first order of business is to make sure that we understand what's in those images. So what what are we even running? What what what AI components are are existing in images? Are we getting images from AI vendors? Like, we getting an NCP, for instance, from Entropic that might have some vulnerabilities in it? Are we doing the most secure configuration that we can around those AI services?

So even when we're building images, when we're building the building blocks of our service by service, we have to understand what are the components that are going in and what is the level of risk of each of those components. And when we put all this together and actually start to provide that application to our user base, then the question is, first of all, how can bad AI actors break it? What are the limits that we can put around our AI services? How can we avoid resource overrun? How can we ensure segregation of data?

If an incident happens in an AI system, right, prompts are very ephemeral. They can be issued by a user and then you get a response, but then the same prompt can be issued by another user and get a completely different response based on context. Right? So how do you manage an incident where the root cause can be either something that is introducing risk on one hand, but can also be very fear of risk on the other hand. We have to have some way of dealing with that.

Now, not all these questions have answers today, but I think the purpose of this session is to start to get you thinking about what are the things that you need to provide as far as capabilities when you are designing your security around AI.

So let's jump right into what's needed. So what's really needed is, first of all, an inventory of the models that are applicable to the organization that wants to use them, what is approved for use in the organization, and really just have an inventory of everything that is running that has AI implications. It could be just analytics around users. It could be what tools are being used. We would really like to get an echo of the prompt. This is a little bit iffy because it might contain some sensitive information, so we gotta do that carefully. But understanding the prompt is absolutely something that is is required if we're gonna have good good AI protection.

We gotta conserve resources. If you're gonna run the inference server or even the model train internally, you gotta manage GPUs. Those are very expensive and the time slices are very precious and we want to make sure that nothing gets wasted. And then the responses, as we mentioned earlier, sometimes the model might divulge some information during the response that is not really applicable to the security and privacy governance that is governing what the application is doing. So all of that are just a way to tell you that we need to have some rules in place. Right? Organizations need to decide which AI systems are appropriate for their environment, in what use case, in what capacity, and then we gotta make sure that the development organization is absolutely endorsing those and that they have some guardrails that if they go beyond them, somebody would know about it and will be able to react.

And the way that we do that is the way that we have to start to look at how those images that carry the AI services are manufactured and are built in the organization. So it has to do with what are the sources of the components, right? Are we running approved operating systems? Are we running the models and all the other components, MCPs, inference servers, all that from trusted sources. We've gotta make sure that we have the right SBOM around them so that we have an inventory of everything that goes into those images, understanding the model, understanding what vulnerability might be at images. A good strategy is to try to have leaner images, right? That's just a good security practice. Right? Having leaner images without bloat and and without too too many components that are not required. And then, of course, if the image is gonna carry any kind of action, it needs to be very contained and the the image configuration, it need to be secured. Right? There's there's absolutely no reason to run any image in an AI capacity or any other capacity as as a root or a privileged user. But with AI, it it is actually really, really important because if we ever trick a prompt to do something that goes beyond what the AI engine can do, especially if it's using tools or agents, having them run as privileged users is is a very risky proposition because the prompts are nondeterministic, and we really don't know what's gonna happen if if a prompt is issued. So we really got got got that could contain it upfront.

So we talk about two things. Right? We talk about policies. We talk about the the ability to understand what is required and not required in the in the environment and then how we start to control it from the supply chain side and the images. On the other hand, what's really, really important is once we have AI components being put into play really right at runtime, we are looking for a way to provide just visibility into everything that the AI engine provides. So whether or not a workload can be accepted into the environment, right? Whether or not an image is good enough or secure enough or risk free enough to be included in in our stack all the way to controlling how we can access the GPU. And then just general security, like behavioral detections around intrusion detection for containers, but then extend that to alerting on dangerous prompts to understand what executables are being launched by the inference server or the MCP or any other component that is tasked with executing what the AI engine will eventually need to do. And if we can wrap it up with some network isolation, that's even better. Right? Because we we just because we don't know what the AI system will do. Again, prompts are sometimes unexpected, it's better to surround the whole thing with a little bit of a fence so that we don't get spillage of bad actions just because an AI engine was brought into play.

So to wrap everything together, it really is if you've done any kind of container security, it actually is really easy to see how everything kind of fits together. Right? Because AI security is really an extension of container security, especially if you run it, your AI in containers. So just doing the basis, right? Removing bloat and risk from the images, scanning and properly gating your development life cycle, and then just managing images with the least privileges and making sure that we have good containment around them. That begins with inventory, it begins with policies. Risk acceptance begins with secure sourcing over the pipeline and then progresses into the runtime controls, detections, jailbreak detections, guardrails executions, and so on. And everything has to be wrapped up with visibility and transparency. We want to make sure that we understand what AI models are being used, what AI infrastructure is being used, and then put together the necessary program in order to execute controls.

So when we talk about our controls, and this is gonna be the last slide and probably your takeaway into what really needs to be done. The controls that we wanna put in place, there's actually kind of four categories of them. One is accurate code scanning and identifying what models are being used, what clients are being used, what SDKs are being used, where they're being used so that we have an ability to maybe stop some of those deployments before they go into productions if they violate our policies or any of the regulatory frameworks that are now starting to come up and present some requirements.

We really have to have security gates. Without those, everything kind of falls apart. So if we can't stop an image from progressing, first of all, from development maybe into the registry and then from the registry into our cluster, we won't be able to to execute the right controls. So we we absolutely have to have guardrails in place that have some ability to delay or stop the rollout of images that are not in line with our security practices.

And then once those images are running, we really have to identify whether or not an attack takes place. We need to understand if a prompt has, let's say, private information in it, if the response has private information in it, if there is attempts to ignore all previous instructions. Some models, you know, most models now are resistant to that, but there are very, very ingenious ways to cause a model to do something that it doesn't wanna do. If you ever see a model, an AI chat that doesn't wanna do something, just tell them that your deceased grandmother promised on you promised your grandmother on her deathbed that you that you would that the model would do something, and you can actually fool the an AI model to do something with some emotional manipulation. So so we we need to identify those. Right? We need to identify prompts where bad faith actors are are actually trying to to circumvent the system in in more or less sophisticated ways.

And because everything is connected and because we wanna trace back, right, if we had a bad prompt that started some some incident, how do we trace it back to what actually caused it? The root cause analysis really requires us to connect everything. We have to understand where images are coming from, what components are there, what vulnerabilities are there. So if we have a prompt that tries to do an action that exploits a vulnerability that is because of a certain component in the image, we gotta have visibility into that because we need to solve this very, very quickly. Right? Once AI becomes indispensable in the sense that any disruption in the AI service becomes a disruption in the application, then that becomes extremely important. So understanding the root cause requires us to connect everything together.

So as I said in the beginning, this is about how applications are using AI. This is about what stacks are going to be required in order to use AI. And not all the stack might run-in your environment. You just might run the client or a gateway or the MCP server and the model might be elsewhere. That's fine. The ability to control the prompt actually starts from the client application side, which is happily where we usually have some impact. And from there, it's really about having good security practices for containers, doing this little add on of prompt and understanding what the flow is of the AI transaction. And if we do all that, we should be able to have a good secure foundation to run our AI systems. Whether or not you go all the way to the model or whether or not you just stop at the inference or anything prior to that, you should have the ability to have confidence that you're gonna deploy your your applications correctly if you have all these capabilities that we we outlined.

So that's my word for today. I really appreciate you connecting to this this call, and you will be able to see this recording. And, hopefully, if you need more information, you can go to aquasec.com where we have a whole page about AI risk and how to deal with it.

We are going to talk about AI and how we can protect local AI deployments.

As you're probably aware, AI is the hottest trend in the market nowadays, and it doesn't just divide, right? We know what the numbers are. The numbers are that there is a lot of push towards AI in organizations. We see upwards of ninety percent of organizations that are actually able to think about what their AI strategy is. Not that they have a lot of advancement there, right? Only five percent of organizations actually do have a clear plan, but everybody thinks that they need to do something with AI.

And what we're seeing though is that security and specifically the governance around AI is lagging, right? We have statistics that tell us that many organizations still don't have a plan on how to tackle AI deployments. If they're done locally, we still have very little runtime controls. We have very little governance even though the regulation is starting to creep up and we have now some frameworks that we can talk about with regards to how we wanna posture our AI, but the end result is that AI is here. AI deployments are here. There are a lot of use cases for them. And in the next thirty minutes or so, we're gonna break down what are the problems that we wanna solve and also what are the solutions or how the solution should look like if we're going to have a good secure AI deployment.

But before we get into that, why do we even wanna have AI in an organization? What's the benefit? Everybody tells you that we need to support it, but what are really the use cases?

So there are actually three kind of main broad based use cases around AI. One is the one I think that we're most familiar with, which is the ability to do some chat with an AI chatbot. So that would be your ChatGPT, your Grok, your Gemini, your Copilot, and so on. And this is between the user and the AI engine. It's a very valid use case. There's a lot to say about that, but the purpose of this presentation is actually the other two use cases, is what happens when we start to develop applications that start to make use of AI components. And it could be that the application itself is using AI to do some backend processing, or it could be that the application is actually providing a natural language interface and then users are able to interact with the software instead of clicking checkboxes and prompts and wizards. We are actually talking to an application like we would to a human that might need to give us that service.

But when we do that, we got to remember that the basic flow of AI, the way that we see it from our lived experience, talking to a chatbot, is actually a little bit more complex. If we think that we're just asking AI a question, so we give it a prompt, the prompt has a inference server that the information is being given to, and then the AI model is the one that's generating the response, this three step process is actually very, very rudimentary, right? Our lived experience is not indicating everything that actually goes on behind the scenes.

The reason is that the model itself, if we talk about what is an AI model, it's really like a brain in a box, right? It knows what it knows, it knows what it was trained on, but it really doesn't know the specifics of a particular organization or a particular company unless you train it specific for that company. So if you're gonna use an AI infrastructure, one of the things that you may have to ask yourself is, am I gonna use a generic model and then try to augment the input, or am I gonna train the model just for me?

And this is really, really important because if you don't train the model and you provide an application, let's say it's a banking application, and you're trying to make the user do something like transfer money from checking to savings, if you just give this prompt to a generic AI that hasn't been trained or hasn't been augmented with the specifics of the organization that you're talking to, if you're like, let's say the bank that wanna provide a service, you're gonna get a very generic response. And if you experience this as a user, right, in your banking application, if I went to my banking application and submitted that prompt and got that response, my experience is not going to be very good. Because I didn't ask how generically to do it, I just wanted the system to do what I asked it to do.

And this is where we got to understand that the prompt that we're sending an AI application, even the simple chatbot, even just a generic ChatGPT in a private window, is still going to have a lot of decoration and information around it. Because the prompt that you're going to send in our fictional application will eventually at the bottom have what the user requested, but at the top, there is a lot of information that is provided to the model around what the user is actually trying to do. It first of all defines the the role of the AI system itself, like your banking application. It defines the role of the user. What is the user allowed or not allowed to do? It defines what tools and agents the AI application is able to use.

When we talk to, if you hear the term agentic AI, that is really just AI taking action as if it was a user and not just providing answers. And if you want the AI to take action, we got to be very, very specific in what action we want the AI to take. So our model is actually getting a lot of information that is not specifically referred to in the prompt, but is inferred by the fact that we have our application is the one that decraps the prompt. So all the things that the model does need, user data, like what is the permitted actions, what is the mood of the user, what is the intent of the user, what tools it can use, what tools it can't use. All of these have to be defined in advance in the context of our application.

So when we're building an AI infrastructure, we are really building not just a prompt to a model, we have a lot of other tools around it that helps us build that model to the extent that it can be used by the application. So that would mean that our prompt might go through a gateway to validate the identity of the user and tell us what it can do and cannot do. There is a lot of context. So if you hear the term MCP, the context protocol for models, that is how we augment the prompt. That is how we provide the prompt with ancillary information that might have changed since it was trained. You might hear the word rag. So that is a way to provide documents and extra stuff that the model can draw on in order to provide its answer. There are tools that can provide us with the ability of the the AI engine to do something in in the real world. And then we have the model itself, and that model, if you're doing it internally and you don't have to host the model internally, but if you even host the model internally, you also have the hardware requirements of the GPU. So it's a little bit more complex than three step process that we saw earlier.

But if you wanna deploy all of these components in house, those are actually gonna translate into quite a lot of, let's face it, Kubernetes deployments. At the end of the day, we're really talking about Kubernetes and we're really talking about how we can protect the infrastructure that you're going to run-in house. So if we're going to translate all of these to a set of Kubernetes deployment, it might look something like this. It might look like a set of deployments that are in Kubernetes. Some of them are going to deal with the application. So the top line is probably something that is more familiar to you as people who write or maybe manage applications in organizations. And then the rest of it is driving the AI process, Documenting the data, making sure that we have all the information we need in order to successfully execute on that transaction.

Now there's a lot of infrastructure, right? There's a lot of infrastructure that lives in containers. We know that infrastructure is containers is a target for bad actors that might want to do something. But when we are dealing with AI that is wrapped in containers, there is a way that attackers might be able to go into this environment that is outside the normal ways that we know and probably can deal with when we're dealing with kind of classic security around intrusion detection and just protecting the the infrastructure itself. And and the fact is that that that there are pretty ingenious ways in which ban actors are starting to exploit the way that AI systems are built in organizations.

It means that there could be prompt injections that make use of tools. It could be leaks of data by the model, right? The model, for instance, in the example that we talked about, the bank account might return a reply, Yes, I've successfully transferred money from checking account number this and that from the checking to the savings and reveal the account numbers. Now this is an unintended consequence, but we gotta make sure that that doesn't happen. Right? So so how can you make sure that that your model is not divulging information as as it's doing its its transactions?

And then there there are all these abilities that that that we need to have in order to to safeguard our our AI infrastructure from from attacks. So what are we missing here? Right? There there's a big infrastructure that's that's being put in place both internal and external to the organization. Information is gonna flow between different components of that that that that infrastructure, and it leaves a pretty big gap for security org organizations to to fill inside companies. Because currently there's really very little visibility as to what AI actually does, where does it look, what is it doing in environment. There's really no way to govern AI in its current state, that it follows any kind of policy. Right? We don't have still the ability to impact like system prompts based on organizational policy. There is no real protection against prompt attacks that might be enticing the AI system to do something that it shouldn't, especially if we're using tools. It's very easy to guilt and have emotional manipulation of AI systems to make them do something that they don't want to do.

And if we're going to put security in place because we're running fast, because we have a lot of very cutting edge technology development taken on in many places at once, It's it's really hard to tell developers and the people that that run the AI infrastructures and and the businesses that push for the adoption of these AI infrastructures that they need to stop and and let's just put a lot of security into this. Right? There's a lot of resistance from the business, from the applications team, from the development teams to slow them down and introduce more things.

So there are challenges. There are challenges in the fact that we're not sure what to do as security professionals. This is very new. We might not have the tools in place and we might not even have the political will of an organization to take the, in my opinion, very necessary pause to see how we're actually going to absorb this thing.

Now, it's not all bad news, right? There are very concrete things that security professionals and cloud native professionals can do in order to stabilize and start to govern those AI deployments, but it requires some actions and requires some idea about what we need to do.

So if we're going go over the kind of required capabilities of what AI security might look like, there are some questions that they need to answer. They need to answer, first of all, where is AI being used? Is it used by users? Is it used by applications? Where is it deployed? Is it deployed where we want it to be deployed? Are the right models in place? There's a lot of just needs to understand what is actually in there in the infrastructure. Because most of that infrastructure is going to run-in containerized applications, our first order of business is to make sure that we understand what's in those images. So what what are we even running? What what what AI components are are existing in images? Are we getting images from AI vendors? Like, we getting an NCP, for instance, from Entropic that might have some vulnerabilities in it? Are we doing the most secure configuration that we can around those AI services?

So even when we're building images, when we're building the building blocks of our service by service, we have to understand what are the components that are going in and what is the level of risk of each of those components. And when we put all this together and actually start to provide that application to our user base, then the question is, first of all, how can bad AI actors break it? What are the limits that we can put around our AI services? How can we avoid resource overrun? How can we ensure segregation of data?

If an incident happens in an AI system, right, prompts are very ephemeral. They can be issued by a user and then you get a response, but then the same prompt can be issued by another user and get a completely different response based on context. Right? So how do you manage an incident where the root cause can be either something that is introducing risk on one hand, but can also be very fear of risk on the other hand. We have to have some way of dealing with that.

Now, not all these questions have answers today, but I think the purpose of this session is to start to get you thinking about what are the things that you need to provide as far as capabilities when you are designing your security around AI.

So let's jump right into what's needed. So what's really needed is, first of all, an inventory of the models that are applicable to the organization that wants to use them, what is approved for use in the organization, and really just have an inventory of everything that is running that has AI implications. It could be just analytics around users. It could be what tools are being used. We would really like to get an echo of the prompt. This is a little bit iffy because it might contain some sensitive information, so we gotta do that carefully. But understanding the prompt is absolutely something that is is required if we're gonna have good good AI protection.

We gotta conserve resources. If you're gonna run the inference server or even the model train internally, you gotta manage GPUs. Those are very expensive and the time slices are very precious and we want to make sure that nothing gets wasted. And then the responses, as we mentioned earlier, sometimes the model might divulge some information during the response that is not really applicable to the security and privacy governance that is governing what the application is doing. So all of that are just a way to tell you that we need to have some rules in place. Right? Organizations need to decide which AI systems are appropriate for their environment, in what use case, in what capacity, and then we gotta make sure that the development organization is absolutely endorsing those and that they have some guardrails that if they go beyond them, somebody would know about it and will be able to react.

And the way that we do that is the way that we have to start to look at how those images that carry the AI services are manufactured and are built in the organization. So it has to do with what are the sources of the components, right? Are we running approved operating systems? Are we running the models and all the other components, MCPs, inference servers, all that from trusted sources. We've gotta make sure that we have the right SBOM around them so that we have an inventory of everything that goes into those images, understanding the model, understanding what vulnerability might be at images. A good strategy is to try to have leaner images, right? That's just a good security practice. Right? Having leaner images without bloat and and without too too many components that are not required. And then, of course, if the image is gonna carry any kind of action, it needs to be very contained and the the image configuration, it need to be secured. Right? There's there's absolutely no reason to run any image in an AI capacity or any other capacity as as a root or a privileged user. But with AI, it it is actually really, really important because if we ever trick a prompt to do something that goes beyond what the AI engine can do, especially if it's using tools or agents, having them run as privileged users is is a very risky proposition because the prompts are nondeterministic, and we really don't know what's gonna happen if if a prompt is issued. So we really got got got that could contain it upfront.

So we talk about two things. Right? We talk about policies. We talk about the the ability to understand what is required and not required in the in the environment and then how we start to control it from the supply chain side and the images. On the other hand, what's really, really important is once we have AI components being put into play really right at runtime, we are looking for a way to provide just visibility into everything that the AI engine provides. So whether or not a workload can be accepted into the environment, right? Whether or not an image is good enough or secure enough or risk free enough to be included in in our stack all the way to controlling how we can access the GPU. And then just general security, like behavioral detections around intrusion detection for containers, but then extend that to alerting on dangerous prompts to understand what executables are being launched by the inference server or the MCP or any other component that is tasked with executing what the AI engine will eventually need to do. And if we can wrap it up with some network isolation, that's even better. Right? Because we we just because we don't know what the AI system will do. Again, prompts are sometimes unexpected, it's better to surround the whole thing with a little bit of a fence so that we don't get spillage of bad actions just because an AI engine was brought into play.

So to wrap everything together, it really is if you've done any kind of container security, it actually is really easy to see how everything kind of fits together. Right? Because AI security is really an extension of container security, especially if you run it, your AI in containers. So just doing the basis, right? Removing bloat and risk from the images, scanning and properly gating your development life cycle, and then just managing images with the least privileges and making sure that we have good containment around them. That begins with inventory, it begins with policies. Risk acceptance begins with secure sourcing over the pipeline and then progresses into the runtime controls, detections, jailbreak detections, guardrails executions, and so on. And everything has to be wrapped up with visibility and transparency. We want to make sure that we understand what AI models are being used, what AI infrastructure is being used, and then put together the necessary program in order to execute controls.

So when we talk about our controls, and this is gonna be the last slide and probably your takeaway into what really needs to be done. The controls that we wanna put in place, there's actually kind of four categories of them. One is accurate code scanning and identifying what models are being used, what clients are being used, what SDKs are being used, where they're being used so that we have an ability to maybe stop some of those deployments before they go into productions if they violate our policies or any of the regulatory frameworks that are now starting to come up and present some requirements.

We really have to have security gates. Without those, everything kind of falls apart. So if we can't stop an image from progressing, first of all, from development maybe into the registry and then from the registry into our cluster, we won't be able to to execute the right controls. So we we absolutely have to have guardrails in place that have some ability to delay or stop the rollout of images that are not in line with our security practices.

And then once those images are running, we really have to identify whether or not an attack takes place. We need to understand if a prompt has, let's say, private information in it, if the response has private information in it, if there is attempts to ignore all previous instructions. Some models, you know, most models now are resistant to that, but there are very, very ingenious ways to cause a model to do something that it doesn't wanna do. If you ever see a model, an AI chat that doesn't wanna do something, just tell them that your deceased grandmother promised on you promised your grandmother on her deathbed that you that you would that the model would do something, and you can actually fool the an AI model to do something with some emotional manipulation. So so we we need to identify those. Right? We need to identify prompts where bad faith actors are are actually trying to to circumvent the system in in more or less sophisticated ways.

And because everything is connected and because we wanna trace back, right, if we had a bad prompt that started some some incident, how do we trace it back to what actually caused it? The root cause analysis really requires us to connect everything. We have to understand where images are coming from, what components are there, what vulnerabilities are there. So if we have a prompt that tries to do an action that exploits a vulnerability that is because of a certain component in the image, we gotta have visibility into that because we need to solve this very, very quickly. Right? Once AI becomes indispensable in the sense that any disruption in the AI service becomes a disruption in the application, then that becomes extremely important. So understanding the root cause requires us to connect everything together.

So as I said in the beginning, this is about how applications are using AI. This is about what stacks are going to be required in order to use AI. And not all the stack might run-in your environment. You just might run the client or a gateway or the MCP server and the model might be elsewhere. That's fine. The ability to control the prompt actually starts from the client application side, which is happily where we usually have some impact. And from there, it's really about having good security practices for containers, doing this little add on of prompt and understanding what the flow is of the AI transaction. And if we do all that, we should be able to have a good secure foundation to run our AI systems. Whether or not you go all the way to the model or whether or not you just stop at the inference or anything prior to that, you should have the ability to have confidence that you're gonna deploy your your applications correctly if you have all these capabilities that we we outlined.

So that's my word for today. I really appreciate you connecting to this this call, and you will be able to see this recording. And, hopefully, if you need more information, you can go to aquasec.com where we have a whole page about AI risk and how to deal with it.

Watch Next